When you have a tool that supports emulation on a host system, one of the common problems you run into is that the resulting project does not look the same on the target hardware. An example of this is when you work with a bit depth of 32 on your host system and then run your Storyboard application on a target system that only supports 16 bits per pixel. Why is this an issue? Because you go from having millions of colors at your disposal to 65,536. This can cause problems like color banding in gradients.

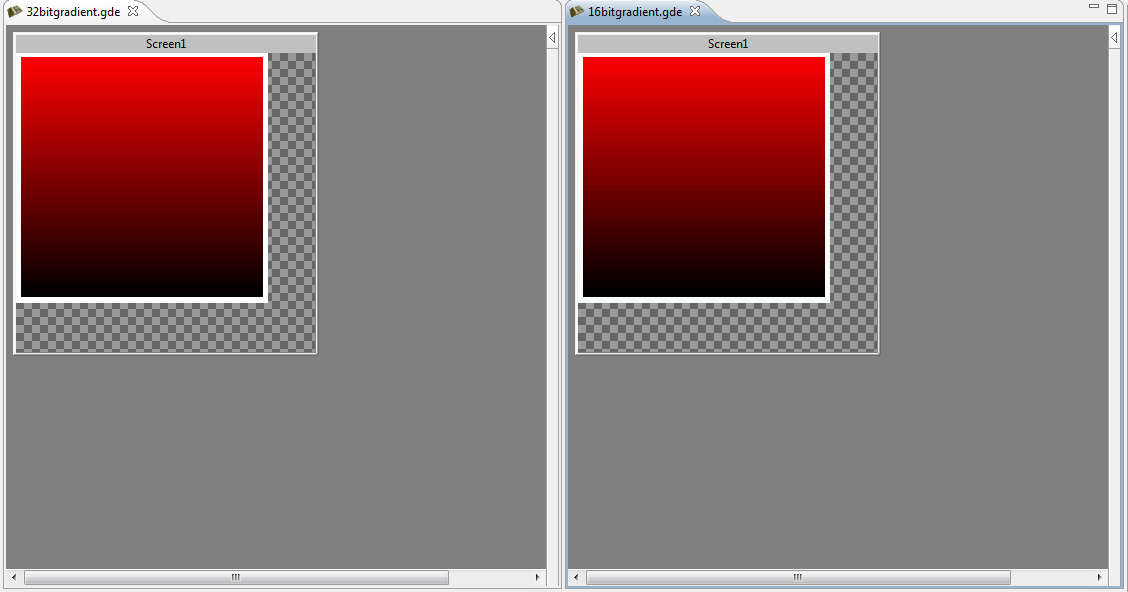
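To put rough numbers on the banding, here is a minimal sketch in Python. It assumes the 16-bit target uses the common RGB565 layout (5 bits red, 6 bits green, 5 bits blue) and that conversion simply drops the low bits of each channel; the actual pixel format and conversion on your hardware may differ. The function names are made up for illustration and are not part of Storyboard.

```python
# Sketch only: assumes an RGB565 target and plain truncation of low bits.
# Helper names are hypothetical, not a Storyboard API.

def to_rgb565(r, g, b):
    """Pack 8-bit channels into one 16-bit value by dropping the low bits."""
    return ((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3)


def from_rgb565(pixel):
    """Expand a 16-bit RGB565 value back to 8-bit channels for display."""
    r5 = (pixel >> 11) & 0x1F
    g6 = (pixel >> 5) & 0x3F
    b5 = pixel & 0x1F
    # Replicate the high bits into the low bits so full scale maps to 255.
    r = (r5 << 3) | (r5 >> 2)
    g = (g6 << 2) | (g6 >> 4)
    b = (b5 << 3) | (b5 >> 2)
    return r, g, b


def red_gradient(width=256):
    """A horizontal black-to-red ramp, one pixel per column."""
    return [(x * 255 // (width - 1), 0, 0) for x in range(width)]


gradient = red_gradient()
quantized = [from_rgb565(to_rgb565(*px)) for px in gradient]

distinct_before = len(set(gradient))
distinct_after = len(set(quantized))
print(distinct_before, distinct_after)  # 256 32
```

Under these assumptions a 256-step red ramp collapses to 32 distinct shades, so each shade covers a band about 8 pixels wide instead of changing on every pixel. That stepping is what shows up as banding.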

We have discussed how we can make users aware of issues such as these before they get to the point where they are running a Storyboard application on their target hardware, and one solution is to render things in the tool with a bit depth of 16. Of course, this would only happen if you set your Storyboard application to have a bit depth of 16. Otherwise, we would render with 32 bits per pixel. The following is an example of what the comparison looks like:

Of course, people may now believe that our tool doesn't render correctly compared to our runtime, but as we move along, the tool can make it apparent to the end user why this is happening.

- Rodney
