Embedded device makers are increasingly incorporating some measure of artificial intelligence (AI) into their products. One area of particular interest is the user experience (UX), where AI can help create a thoughtful and intuitive interface.

Much embedded AI activity to date has focused on digital voice assistants and their ability to add natural dialog to our devices. While we’ve written before about how Google Assistant and Amazon Alexa voice integrations are a critical part of next-gen devices, this is quickly becoming an expectation rather than a differentiator.

A more lasting UX improvement on your embedded GUI can come from adding a custom AI that leverages your company’s area of expertise. While this requires a bit of data science expertise, it’s the best way to add industry-specific value that’s difficult to replicate.

How your embedded GUI could benefit by using artificial intelligence

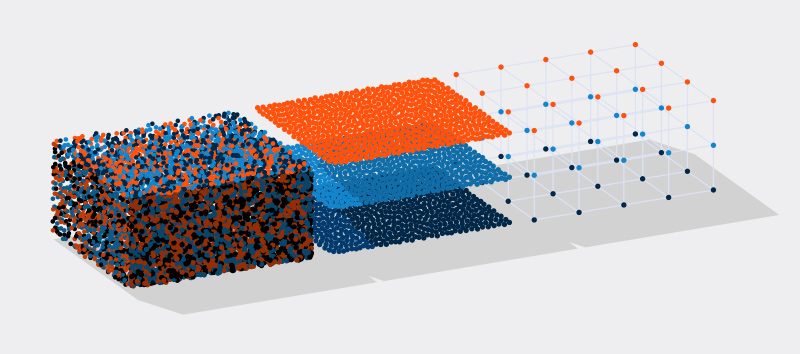

AI is powered by machine learning that needs to be trained with massive amounts of data. Hence, areas of your business where you currently have or could collect bulk data – product usage, sensor inputs, and diagnostic logs – are natural places where an AI could make a difference.

Let’s take a look at some example UI capabilities where AI could add value to your product.

Vision processing

Allowing a device to see gives it an extra sense to interact with a user. Combined with an inexpensive camera sensor, a bit of AI can perform magic:

- Tagging family members in smart homes for personalized settings

- Analyzing medical scans and flagging troublesome conditions

- Recognizing fingerprints for devices requiring authorized use

- Understanding handwriting in ATMs or document scanners

Pattern recognition

A properly trained AI can become very good at recognizing patterns hiding in data in anything from seismic analyzers to self-driving cars. By sifting through noisy and quiet signals, AI can auto-classify important data, simplify inputs to other systems, or even mimic human expertise.

What data do these pattern-recognizing AIs interpret? Often it’s basic sensor readings – temperature, location, orientation, atmospheric pressure, radar, or infrared – used in a variety of industrial, medical, and consumer electronics applications. However, another rich area of data is the user’s behaviour: how they use and operate the device. An AI-enabled device can learn its owner’s habits, allowing it to automatically guess the owner’s intent and conform to their preferences. These subtle improvements can make a substantial difference to the UI and UX.
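To make the idea of learning an owner’s habits concrete, here is a minimal sketch in Python. It is not a trained machine learning model, just a per-hour running average, and the `HabitModel` class, its method names, and the thermostat scenario are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict


class HabitModel:
    """Learns an owner's preferred setting for each hour of the day.

    Hypothetical example: the class, its methods, and the thermostat
    scenario are illustrative and not taken from any real product.
    """

    def __init__(self, default=21.0):
        self.default = default
        self._sum = defaultdict(float)  # hour -> sum of observed settings
        self._count = defaultdict(int)  # hour -> number of observations

    def observe(self, hour, setting):
        """Record a setting the user chose manually at a given hour (0-23)."""
        self._sum[hour] += setting
        self._count[hour] += 1

    def predict(self, hour):
        """Return the average setting seen at this hour, or the default."""
        if self._count[hour] == 0:
            return self.default
        return self._sum[hour] / self._count[hour]


# The owner nudges the thermostat a few times...
model = HabitModel(default=21.0)
model.observe(7, 22.0)
model.observe(7, 23.0)
model.observe(22, 18.0)

# ...and the device can now guess what they want at each hour.
morning = model.predict(7)   # average of the two morning observations
night = model.predict(22)    # the single night-time observation
unseen = model.predict(13)   # no data yet, so the default is used
```

A real product would likely replace the running average with a trained model and account for weekdays, seasons, or multiple users, but the UI consequence is the same: the device proposes a setting instead of waiting to be told.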

Natural language processing

Natural language processing (NLP) is the ability of devices to understand the intent behind human language. Voice assistants typically use NLP to extract meaning from the text transcriptions they create from audio speech, but NLP can also interpret text for other applications:

- Locating street addresses for navigation and logistics solutions

- Providing chatbots for on-device support

- Scanning speech-to-text requests sent to IoT and smart home devices

- Interpreting scheduled times or locations in free-form emails
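As a rough illustration of mapping a transcribed request to an intent, the Python sketch below scores each intent by keyword overlap. This is a deliberately simple stand-in for a trained NLP model, and the intent names, keyword sets, and `classify_intent` function are assumptions made up for this example.

```python
import re

# Hypothetical intents and keywords for a smart-home device.
INTENT_KEYWORDS = {
    "lights_on": {"turn", "on", "light", "lights", "lamp"},
    "lights_off": {"turn", "off", "light", "lights", "lamp"},
    "set_temperature": {"set", "temperature", "thermostat", "degrees", "heat"},
}


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def classify_intent(text, min_score=2):
    """Return the best-matching intent, or None if unclear.

    An intent's score is the number of its keywords found in the text.
    Low scores and ties return None so the UI can ask the user to
    rephrase instead of guessing.
    """
    tokens = tokenize(text)
    scores = {
        intent: len(tokens & keywords)
        for intent, keywords in INTENT_KEYWORDS.items()
    }
    best = max(scores.values())
    winners = [intent for intent, score in scores.items() if score == best]
    if best < min_score or len(winners) != 1:
        return None
    return winners[0]


off_request = classify_intent("Please turn off the kitchen lights")
temp_request = classify_intent("Set the thermostat to 22 degrees")
unclear_request = classify_intent("What time is it")
```

Returning `None` for ambiguous input is a design choice rather than a requirement: it gives the UI a natural place to ask a clarifying question instead of acting on a bad guess.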

Automation

Computers have always been excellent at automation. However, they aren’t as good at performing tasks where each occurrence isn’t always identical. This is where an AI comes in; it can give a computer the ability to perform rapid, high volume, or highly repetitious tasks in a way that adapts to changing circumstances.

AI-enabled automation is the essence of self-driving cars. The driving task is mind-numbingly repetitive yet constantly different, requiring rapid adaptation – an ideal situation for a smart computer to manage. Process automation is similar, allowing industrial robots to become intelligent about what they are programmed to do and to achieve it flexibly. By focusing the robot more on what it needs to do than on how to do it, the UI for automating it can be dramatically simplified.

How to design artificial intelligence into your embedded GUI

Now that we’ve covered what your device might do with an AI, how do you design your user interface around it? Very few resources discuss how the addition of intelligence should impact your UI design. One person who does is senior UX designer Naïma van Esch, and while her recommendations are specific to consumer devices, they are generally useful for embedded systems too:

- Manage expectations. Set the user’s expectation about what the device can and cannot do so they’re not disappointed. Misaligned expectations may cause users to use the product unexpectedly, and you should be prepared for that.

- Design for forgiveness. The AI isn’t going to be perfect, so design the UI so the users are inclined to forgive it. That can include UI treatments like a friendly tone, delightful features, or functional aspects such as the ability to operate without connectivity.

- Data transparency and tailoring. Be transparent about the data you’re collecting on the user and offer them the ability to tailor it. That helps with privacy issues as well as concerns about Big Brother. It also lets your users make the UX smarter if, for example, multiple people are sharing the UI unbeknownst to the device.

- Privacy, security, and control. Gain the user’s trust when using their personal data, and don’t do things without the user’s consent.

Using AI for embedded systems testing

AI can also improve your product in a way that is hidden from the user: by improving your UI testing. Using AI in your testing program won’t remove the need for human testers, but it can reduce the tedium that comes with the job and reserve your human team for more creative tests. Some of the advantages of having your UI testing backed by an AI include:

- Improved confidence in reproducible regression testing

- More thorough tests that increase product quality

- Less boredom for human testers

Thankfully, there are many people tackling this problem, and there are now a whole lot of tools that can be applied in building a smart testing platform.

Get smart with AI

With all of this discussion, you’re probably itching to get started on an AI capability for your products. There are many pre-made tools for building an AI: TensorFlow, SageMaker Neo, and PyTorch are a few of the more popular ones. All of these resources include tutorials and a community of developers and data scientists supporting them. To build up a grounding in the data science needed, you might try a resource like scikit-learn, a Python toolkit whose documentation provides succinct visual explanations of the different mathematical models and when they’re best used.

And of course, if you want to explore how AI can help you create a better UI/UX, we’d love to talk!
